"Thanks to our technology centred on social learning / mobile learning / micro learning / immersive learning, you will triple your engagement and memorisation rates!" We'd wager that you've read this sentence dozens of times… and that you'll hear it again with every new pedagogical innovation. Yet are we actually any further forward on the question of training's impact on learners' real-world practices? Not necessarily — and here's why.

Faced with the complexity of e-learning, the temptation to oversimplify

Training projects are complex, with numerous organisational constraints to account for: learner availability, budget, timelines, needs, stakeholders, and so on. That alone would be more than enough to deal with, and it already occupies a significant portion of L&D managers' time. Now add the pedagogical challenges of training: what is the best approach given your cohort's level? How can you ensure that their real-world practices genuinely change? How can you sustain skill development after the training ends?

That's a lot of questions for which we are tempted to find simple… and universal answers.

We already tend to favour the indicators that are easiest to measure when tracking our actions: completion rates and learner satisfaction. These give us a quick, if approximate, answer to our questions, because properly measuring skill development takes time, expertise and resources, and there is no ready-made solution (see our article on measuring pedagogical effectiveness).

Thanks to their simplicity, these engagement metrics, although far removed from genuine pedagogical effectiveness, have become the e-learning standard. Gradually, we stop asking how to train well and start asking how to engage well. And that is where the gimmick learning approach enters the scene.

Is gimmick learning the solution?

Gimmick learning (not to be confused with machine learning) encompasses social learning, peer learning, adaptive learning, mobile learning… and all technology-centred approaches to training. What they share is that they feed on our need to simplify the training problem and on our intuitions ("playing is enjoyable, so if my learners follow a serious game, they'll be more engaged").

And in some situations, that will indeed be the case. To learn how to solve complex tasks, it can be useful to work in groups so that the learning effort is distributed across several minds, provided that the group work is well structured (ref). For delivering highly segmented content to a heterogeneous group of learners, adaptive learning will be ideal for bringing everyone up to the same level of mastery.

But beware of the hammer syndrome: when you have a hammer in your hand, every problem looks like a nail. This effect is particularly strong with gimmick learning approaches, since they rely precisely on an oversimplification of learning. Yet there are many cases where these modalities stop working and can even have a negative effect on learning. Working in groups or exchanging with peers has a cost in terms of cognitive resources (see our article Mental overload, the enemy of learning), and research shows that this cost has to pay off: for "simple" tasks, learners progress more if they work alone (ref). Similarly, although adaptive learning has a positive effect on learning on average, there are cases where its effect is weak or even negligible: when the content is passive, for instance, or when it is adapted on the wrong basis, such as learning styles rather than mastery level. It is therefore important to keep in mind that the problem to be solved is often bigger than it appears, and that the success of any modality depends on how well it is tailored to learners' needs.

The right learning modality at the right time, thanks to pedagogical artificial intelligence

Let us recap. On one side, we have organisations facing complex training challenges, with tight budget, time and resource constraints that leave little room for fine-grained analysis. On the other, a galaxy of technologies, each effective but only in a limited set of cases. Are we facing an unsolvable dilemma? Until recently, the answer tended to be yes, as evidenced by the success of gimmick learning approaches and by engagement's status as the reigning success metric.

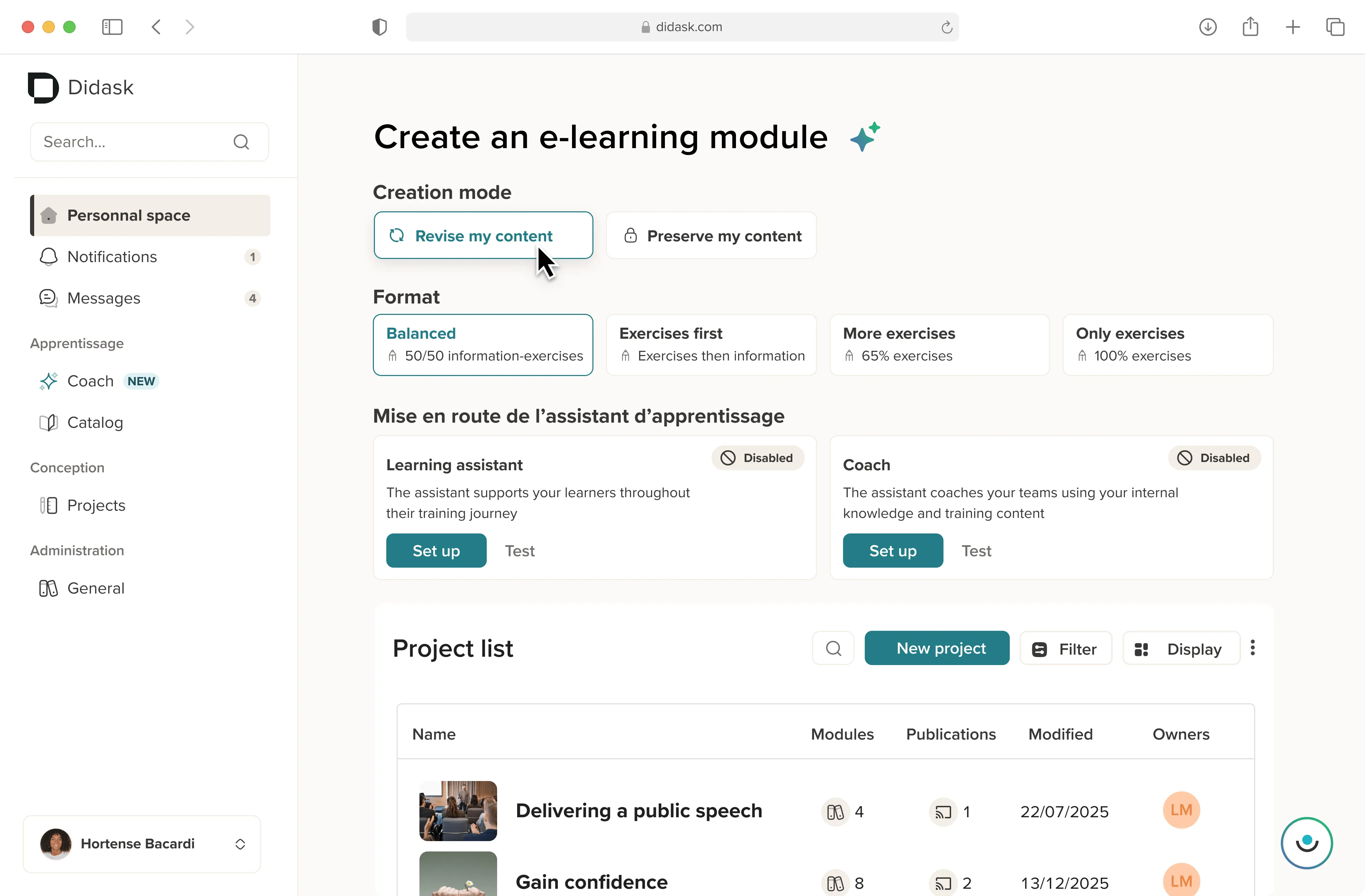

But today, the rise of the latest machine learning techniques has considerably accelerated the large-scale conversion of documents into e-learning content. Within Didask's next-generation LMS platform, we have drawn on these techniques to develop our pedagogical artificial intelligence. Starting from your source content (extracted from a document, an exchange on a company messaging platform…), it automatically identifies the challenges your learners face and matches the appropriate learning modalities to them.

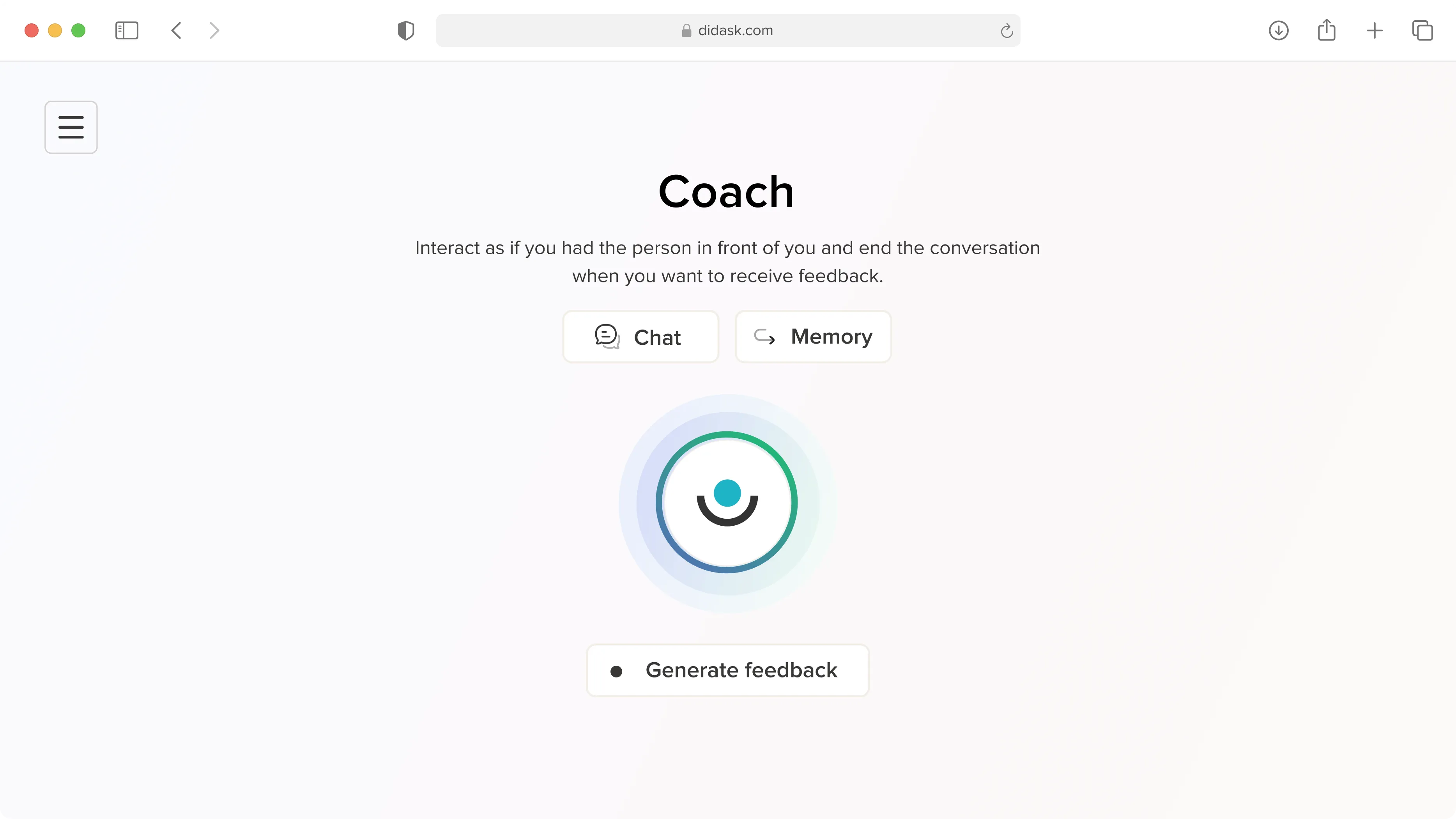

Do they have preconceptions to deconstruct? It will suggest an exercise where learners can make the mistake themselves before receiving feedback that gradually guides them towards the correct understanding. Do they need to memorise a large number of independent elements, as in compliance training? It will suggest flashcards, a high-intensity revision format to consolidate their learning. The good news is that this works regardless of your topic, from CSR to soft skills and leadership.

Each modality arrives not as the universal answer to the learner's needs, but as exactly the right one for that precise moment. A promise — this one kept — of time savings, efficiency and genuine impact.
