Measuring the impact of a training course is an exercise that everyone pretends to do. End-of-course satisfaction questionnaires, completion rates, self-evaluations... There is no shortage of indicators.

But do these metrics really reflect the increase in learners' skills? Spoiler: not really.

At Didask, we conducted a comparative impact study to measure what really matters: the ability of learners to apply their new skills in a real situation. The results revealed three counterintuitive lessons that challenge many beliefs about online training.

How we conducted our impact study

Our objective was simple: to measure the real impact of different educational formats on three key dimensions.

- Learner satisfaction

- The perceived increase in skills (what learners think they have learned)

- The real increase in skills (what they are actually capable of doing)

To do this, we compared three groups of learners following the same content on diversity and inclusion in the workplace, each in a different format.

Group 1 - The classic LMS: an existing course on a competing LMS representative of the market. Attractive design and well-presented content, but few engaging exercises, limited feedback, and poorly organized information with no concrete scenarios.

Group 2 - The top-down format: a video with exactly the same content and no interaction. The most passive format possible.

Group 3 - The Didask format: the same content, but restructured according to our pedagogical choices: frequent exercises with immediate feedback, attention to the most common cognitive biases, and scenarios with personalized feedback.

The detailed results of this study will be covered in a dedicated article to be published soon. We will therefore not reveal the complete figures on each group's performance here (though we have heard that Didask leads to better retention in particular).

What interests us today are the cross-cutting lessons this study taught us about how adults actually learn: three counterintuitive findings that call into question the indicators usually used to measure training effectiveness.

Lesson 1 - Satisfaction does not predict learning

As training professionals, we have a keen eye. We can immediately tell outdated, top-down content apart from a dynamic, interactive format. We set our expectations high.

But here's what the data taught us: as long as the subject is reasonably appealing and the presentation is decent, even the most basic formats can seem satisfying to learners.

In our study, the three groups had an average satisfaction score of around 4/5. Whether they followed a simple video, a classic course, or an interactive Didask course, it didn't matter: the difference was not statistically significant (F(2, 57) = 2.407, p = 0.099, for statistics enthusiasts).
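For readers who want to check the reported statistic, here is a minimal Python sketch of a one-way ANOVA. It assumes a standard between-subjects design, in which case F(2, 57) corresponds to 3 groups and 60 participants in total. The function names and the toy ratings are hypothetical and are not the study's data. The p-value helper uses a closed form that holds only when the numerator has 2 degrees of freedom, which is the three-group case.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    group_means = [mean(g) for g in groups]

    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Variation of individual scores around their own group mean.
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )

    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


def p_value_three_groups(f_stat, df_within):
    """Upper-tail p-value of F(2, df_within).

    Closed form valid only for a numerator with 2 degrees of freedom.
    """
    return (1 + 2 * f_stat / df_within) ** (-df_within / 2)


# Hypothetical toy ratings for three formats (NOT the study's data).
toy_groups = [[1, 2, 3], [2, 3, 4], [3, 4, 5]]
f_stat, df_b, df_w = one_way_anova_f(toy_groups)
print(f_stat, df_b, df_w)                 # 3.0 2 6
print(p_value_three_groups(f_stat, df_w))  # 0.125

# Plugging in the statistic reported in the article.
print(round(p_value_three_groups(2.407, 57), 3))  # 0.099
```

The last line recovers the p = 0.099 quoted above from F(2, 57) = 2.407, which is above the usual 0.05 threshold.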

Even more troubling: this satisfaction was essentially uncorrelated with measured performance. The correlations ranged from 0.11 to 0.18 depending on the measure, none of them statistically significant.
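To see why correlations of this size do not reach significance, here is a rough Python sketch based on the Fisher z-transform, which is a normal approximation and not an exact test. The sample size behind each correlation is not stated in the article; n = 60 is an assumption derived from the F(2, 57) reported for satisfaction. The function names are hypothetical.

```python
from math import atanh, sqrt, tanh
from statistics import NormalDist


def correlation_p_value(r, n):
    """Approximate two-sided p-value for a Pearson r (Fisher z-transform)."""
    z = atanh(r) * sqrt(n - 3)
    return 2 * (1 - NormalDist().cdf(abs(z)))


def smallest_significant_r(n, alpha=0.05):
    """Smallest |r| reaching two-sided significance at level alpha."""
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return tanh(z_crit / sqrt(n - 3))


N_ASSUMED = 60  # assumption: total sample size implied by F(2, 57)

for r in (0.11, 0.18):
    print(r, round(correlation_p_value(r, N_ASSUMED), 2))

print(round(smallest_significant_r(N_ASSUMED), 2))
```

Under this assumption, a correlation would need to be around 0.25 in absolute value to be significant at the 5% level, so values between 0.11 and 0.18 fall short. With smaller samples the threshold is higher still.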

Concretely? A learner could love their training (recommend it, enjoy taking it, never feel like giving up) while being unable to apply what they had just learned.

The link with cognitive science

This phenomenon is explained in particular by cognitive fluency: when information seems easy to process (a pleasant video, well-designed slides), our brain interprets this ease as a sign of understanding.

Implication for training managers

Course satisfaction is not a sufficient indicator that learners will be able to put what they have seen into practice. Let's stop relying only on smileys or stars at the end of training to validate the effectiveness of a training program.

Lesson 2 - We are poor judges of our own learning

Second pedagogical wake-up call: not only can learners be satisfied without having learned, but they also think they have progressed enormously when this is not the case.

In our study, participants rated the improvement in their skills as high. The majority of responses were between 4 and 5 out of 5 in all three groups. They were convinced that they were now able to recognize inclusive businesses and identify best practices.

Except that their measured performance told a completely different story. It was actually quite low, well below what they thought they had mastered.

And the worst part? Believing you had learned a lot did not predict real performance at all. The correlations were around 0.17, which was not statistically significant.

A learner could feel highly competent after their training... and fail miserably to apply these skills in a concrete situation.

The link with cognitive science

Welcome to the world of the illusion of competence and the Dunning-Kruger effect. The more novice we are in a subject, the less able we are to assess our own level. We lack precisely the metacognitive skills needed to judge our mastery. As a result, we consistently overestimate what we have just learned.

Implication for training managers

Measuring self-reported skill gains actually tells you very little about the real increase in skills. Self-evaluations at the end of the course (“Do you think you are capable of...”) are not worth much. You have to test learners in realistic situations, with exercises that reveal what they really know how to do.

Lesson 3 - Without strong incentives, even motivated learners remain on the surface

Third discovery, and the most surprising: learners, even motivated ones, tend to process information superficially.

In our Didask group, we analyzed in detail the responses to a simulation activity. Learners needed to identify areas for improvement in a job ad to make it more inclusive.

Spontaneously, the answers remained very general. “There is gendered vocabulary”, “The ad lacks inclusiveness”, without further precision.

It was only when the system asked targeted follow-up questions that learners deepened their analysis. For example: “You indicated the presence of overly gendered vocabulary. Which part of the ad is your response based on, and what consequences could this have for potential candidates?”

Faced with this follow-up prompt, the learners then detailed their reasoning, quoted specific passages, and explained the mechanisms involved.

One could interpret these superficial responses as an overall lack of motivation. But the analysis of logs and interactions shows the opposite. The time spent on the various pieces of content was consistent with what was expected (no skimming). Learners even answered optional questions.

The link with cognitive science

This phenomenon is explained by Craik and Lockhart's levels-of-processing model and, more recently, by the ICAP framework (Interactive, Constructive, Active, Passive). Our brain naturally favors cognitive economy: processing information at the minimum level sufficient to move forward. Without explicit incentives to actively manipulate concepts, we remain in a “passive” or at best “active” mode, without reaching the “constructive” level necessary for lasting learning.

Implication for training managers

Without a format that requires thorough analysis and manipulation of the information presented, learners risk being left with only a superficial mastery of the concepts. Just asking questions is not enough. We need activities that require thinking, justifying, applying, and correcting.

What an LMS really needs to enable to develop skills

These three lessons converge on the same conclusion: a good LMS is judged not only by its design or the satisfaction it generates, but by its ability to drive deep cognitive engagement.

In concrete terms, here is what a learning platform should make possible to ensure a real increase in skills.

1. Test regularly with immediate feedback to improve the metacognition of learners. Since they are poor judges of their own progress, they need to be provided with concrete opportunities to test what they really know. Feedback then becomes a realistic self-assessment tool.

2. Offer formats that guide the learner towards deep manipulation of information. Not just multiple-choice or closed-ended questions, but exercises that require learners to analyze, justify, and argue, with follow-up prompts that encourage them to go deeper.

3. Confront learners with the mistakes they would make in the field, not just check their theoretical understanding. Situations should be realistic enough to reveal false beliefs and mental shortcuts that are problematic in a real context.

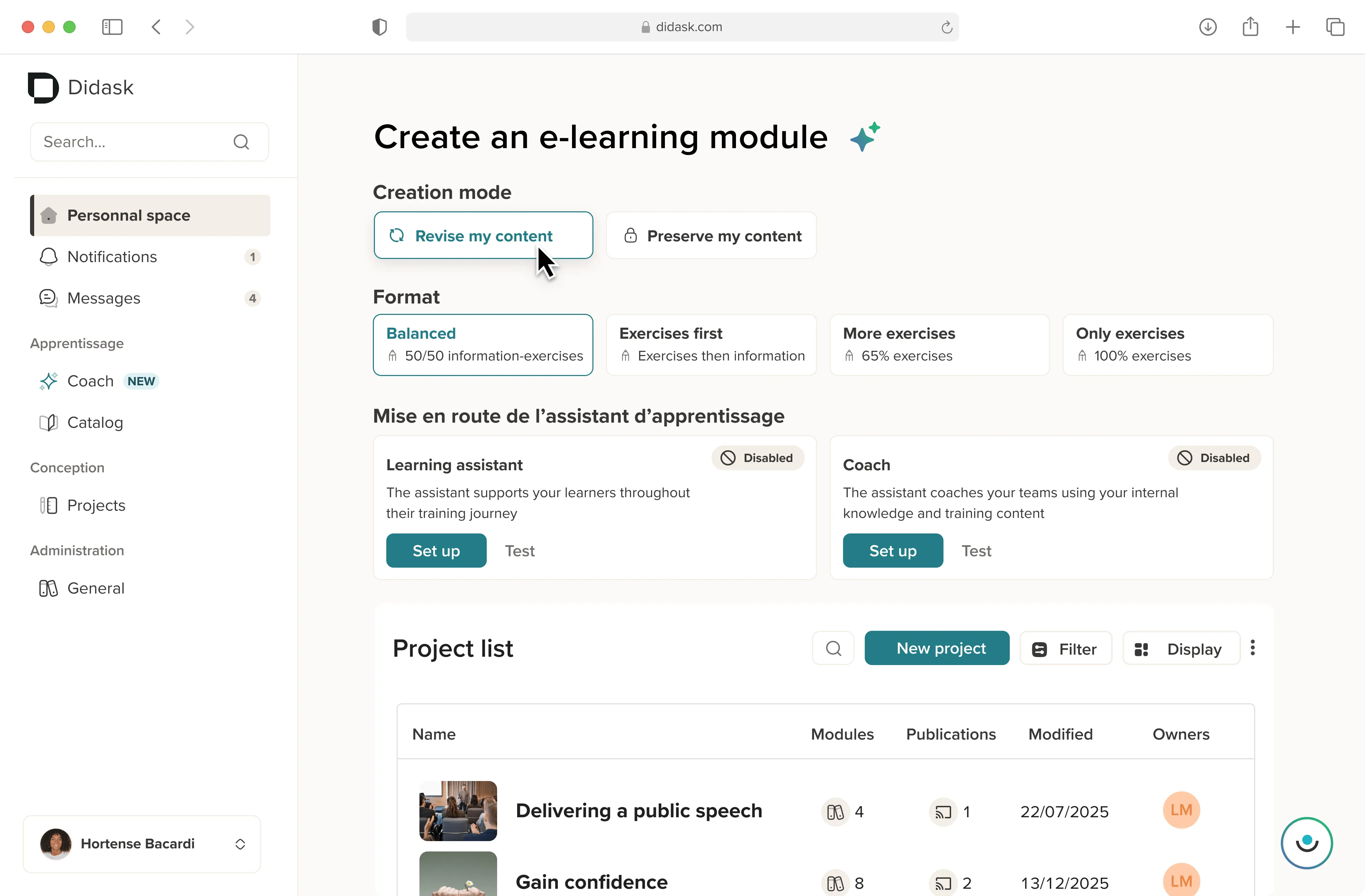

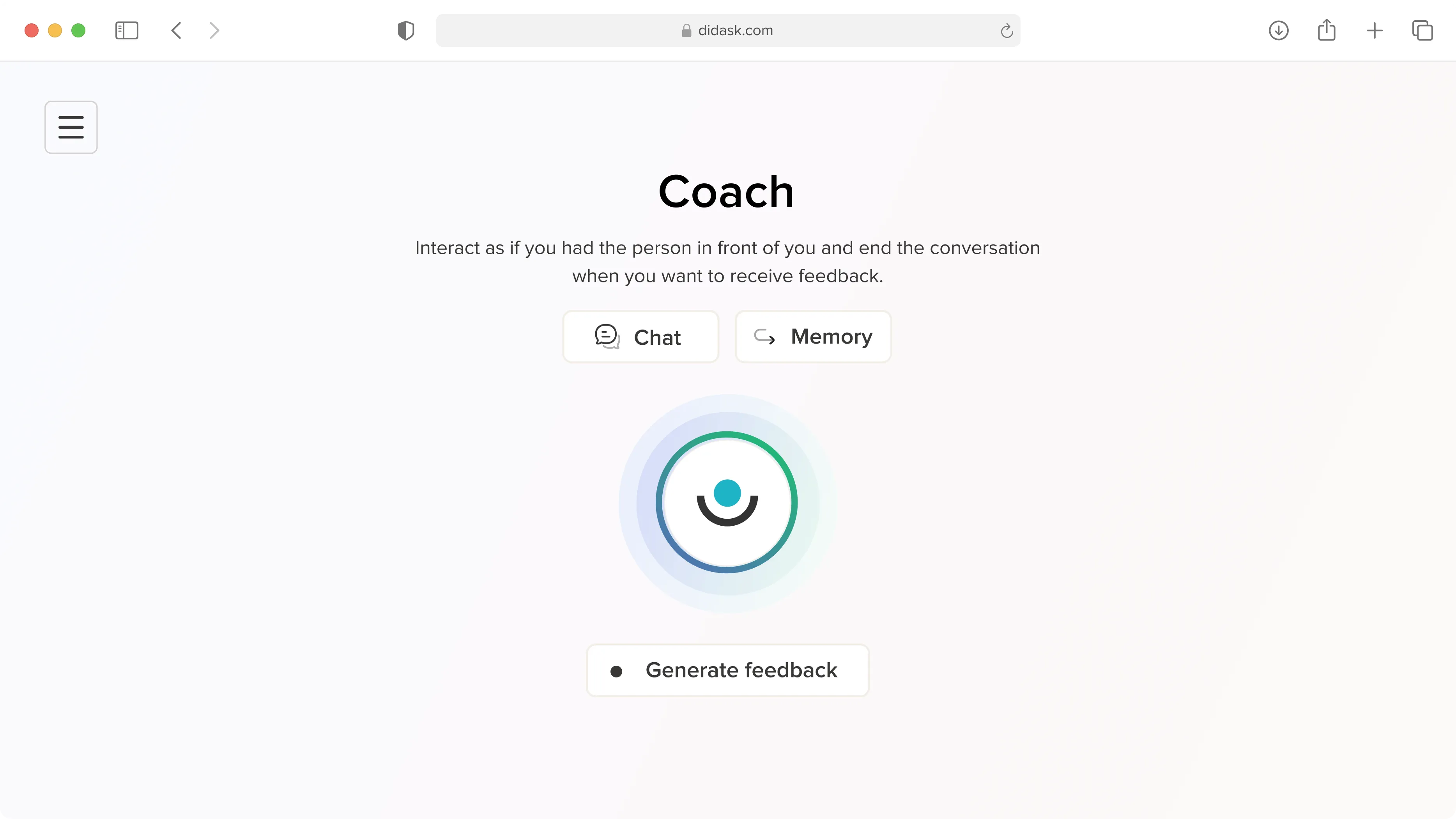

This is exactly what we designed at Didask: an LMS and an authoring tool that integrate these principles into each learning module. Not out of ideology, but because the data shows us that this is what works.
